Characteristics of the third generation of computers

The incorporation of integrated circuits made possible a new generation of computers with the following characteristics:

- They were much smaller.

- They gave off less heat, which reduced cooling requirements.

- Because they were smaller, they also consumed less electrical power.

- The connections inside integrated circuits were much more reliable than soldered connections, which made computers more reliable and more flexible.

- Minicomputers appeared: cheaper machines with greater processing capacity.

- Teleprocessing (processing of data from terminals in a central unit).

- Multiprogramming (a technique in which two or more programs are held in main memory at the same time and the CPU switches among them, so that their execution overlaps).

- Renewed and improved peripheral devices.

- The number π (pi) was calculated to more than five hundred thousand decimal places.

History of the third generation of computers

The third generation of computers began with the invention of the integrated circuit, better known as the microchip.

Jack St. Clair Kilby and Robert Noyce independently invented the integrated circuit in the late 1950s, and with it they revolutionized the electronics industry and ushered in an era of high technology. From that moment on, a series of historic developments followed.

Intel engineer Ted Hoff would later conceive the microprocessor, which Intel released in 1971 as the 4004. Earlier, in a different field, shortly after the discovery of the structure of DNA, the Russian-born physicist and astronomer George Gamow proposed that the sequence of its bases formed a code.

On April 7, 1964, IBM announced the System/360 (S/360), designed under chief architect Gene Amdahl. It was one of the first commercial computers built with integrated-circuit technology, in the form of IBM's hybrid Solid Logic Technology (SLT) modules. The 360 is considered one of the most important computers in history, as it influenced computer design for years afterward and marked the starting point of the third generation of computers.

It was a family of mainframe computers designed to cover all kinds of applications, regardless of their size or field (scientific or commercial). This first group of machines built with integrated circuits was called the Edgar series, and it could handle numerical analysis as well as administrative work and file processing. The models ranged in speed from 0.034 MIPS to 1.7 MIPS (a factor of 50) and in main memory from 8 KB to 8 MB. The design drew a clear distinction between architecture and implementation, so customers could buy a smaller system knowing they could always migrate to a higher-capacity one, which made the line a resounding success in the marketplace.

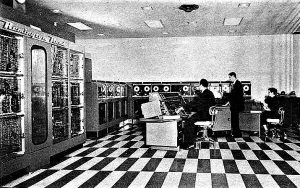

Control Data Corporation brought to market the CDC 6600, a supercomputer capable of executing millions of instructions per second, which made it the most powerful computer of its time.

New storage units appeared, such as 9-track magnetic tape, and although some installations still used punched cards for data entry, they now had fast card readers.

Digital Equipment Corporation (DEC), recognizing that IBM dominated an important sector of the market, decided to focus on smaller computers that were cheaper and easier to operate, and these machines became popular. In this way, the minicomputer emerged during this technological era.

Computer size in the third generation of computers

With each invention, computers needed less space to operate. First came the transistor, which replaced the vacuum tube for processing information and defined an era (the second generation). It considerably reduced the size of computers, since about 200 transistors fit in the space of a single vacuum tube. Then came the integrated circuit, better known as the microchip.

One of the most important advantages of integrated circuits is their small size compared with circuits built from discrete components. An integrated circuit can contain thousands to millions of transistors in just a few square millimeters, which made it possible to miniaturize components. As a result, computers became smaller and faster, gave off less heat, and became more efficient.

Inventions

Initially, computers were built for a single kind of work, either scientific calculation or business data processing, but never both.

The integrated circuit itself was invented several years before this generation began. The first device, made of germanium, combined several electronic components on a single semiconductor base to form a phase-shift oscillator. Integrated circuits were economical because they were manufactured in one piece by photolithographic printing and could be mass-produced with almost no defects. They were also very efficient because they consumed considerably less power.

Thanks to integrated circuits, computer manufacturers gained more flexibility in programming and were even able to standardize their models.

Today integrated circuits are found in many electronic devices, such as cell phones, clocks, televisions, and vehicles.

Inventors of the third generation of computers

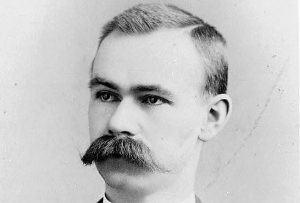

Two of them stand out: Jack St. Clair Kilby, winner of the 2000 Nobel Prize in Physics, and Robert Norton Noyce, co-founder of Fairchild Semiconductor and Intel, also known as "the Mayor of Silicon Valley". Kilby built the first working integrated circuit in 1958 and filed his patent in early 1959. Noyce filed for his own version about six months later, solving some of the problems of Kilby's design.

Featured computers of the third generation of computers

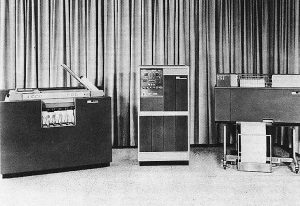

- IBM 360: Without a doubt, the IBM 360 was the most relevant machine of this computer generation. It was built with IBM's integrated Solid Logic Technology (SLT) modules and had such an impact that more than 30,000 units were manufactured. These computers were designed to cover applications regardless of their size or use (scientific or commercial). The line was a great success because users knew they could buy a small model that met their needs and later move up to a system with greater capacity. It marked a milestone in the technology industry by influencing equipment designs in the following years. It is also often cited as the first computer in history to be attacked by a virus.

- CDC 6600: Control Data Corporation launched this computer, designed by Seymour Cray and built around a 60-bit CPU. It is known as the first supercomputer in history. It had 10 peripheral processing units and was considered the most powerful and fastest computer in the world at the time, executing millions of instructions per second with a performance of 1 megaflops. One of the first CDC 6600s was delivered to the European Organization for Nuclear Research (CERN), where it was used mainly for research in high-energy physics.

- DEC (Digital Equipment Corporation) PDP-8 (Programmed Data Processor): This is generally considered the first minicomputer. It used a 12-bit word and was conceived in Reading, England.