Definition

In computing, cache memory is a component, found in both hardware and software, that stores frequently used data so that the system can respond to requests for that data more quickly.

Cache memory can be thought of as a special buffer alongside the main memory that every computer has. It performs functions similar to those of main memory, but it is smaller and faster. One of the best-known caches is the web browser cache, which keeps temporary copies of files downloaded from the Internet so that they are available locally.
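To make the idea concrete, here is a minimal Python sketch of a software cache placed in front of a slow data source. The `slow_fetch` function and the `SimpleCache` class are hypothetical names used only for illustration, and this cache never discards anything, which a real cache eventually has to do.

```python
import time


def slow_fetch(key):
    """Hypothetical slow data source standing in for main memory, a disk, or a server."""
    time.sleep(0.01)  # simulate a slow access
    return key.upper()


class SimpleCache:
    """Keeps every value it has fetched so repeated requests skip the slow source."""

    def __init__(self, fetch):
        self.fetch = fetch
        self.store = {}
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.store:      # hit: answer directly from the cache
            self.hits += 1
            return self.store[key]
        self.misses += 1           # miss: go to the slow source
        value = self.fetch(key)
        self.store[key] = value    # keep a copy for the next request
        return value


cache = SimpleCache(slow_fetch)
for k in ["a", "b", "a", "a", "c", "b"]:
    cache.get(k)
print(cache.hits, cache.misses)  # 3 3
```

Only the first request for each key pays the cost of `slow_fetch`; every repeat is served from the dictionary.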

Characteristics

- It provides quick, organized access to data, readings, or files in common use, as well as to data recently downloaded from the Internet.

- It orders its contents by importance, according to the requirements of the system that uses it.

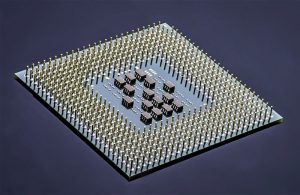

- It improves the processor's performance by keeping frequently used information at hand, as if it were a tool in constant use.

- It reduces the workload of random access memory, better known as RAM.

- Although it is an important part of the computer, it takes up little space in hardware or software.
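One common way to rank cache contents is least recently used (LRU) replacement: when the cache is full, the item that has gone unused the longest is discarded. The text above does not name a specific policy, so LRU is an assumption here. The sketch below uses Python's `collections.OrderedDict`, and the `LRUCache` class is a hypothetical name.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()  # least recently used first, most recent last

    def get(self, key):
        if key not in self.entries:
            return None                  # miss
        self.entries.move_to_end(key)    # mark as most recently used
        return self.entries[key]

    def put(self, key, value):
        if key in self.entries:
            self.entries.move_to_end(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry


cache = LRUCache(2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")      # "a" becomes the most recently used entry
cache.put("c", 3)   # the cache is full, so "b" is evicted
print(list(cache.entries))  # ['a', 'c']
```

Other orderings, such as least frequently used, fit the same interface; only the choice of which entry to evict changes.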

What is cache memory for?

Cache memory has two main functions:

- The first is to keep the temporary data the system needs organized by relevance, so that it is ready when the computer is asked to perform a task.

- The second is to take part of the load off main memory, so that information exchanges do not fail or stall because memory is oversaturated.

History

Cache memory originated when the memory of early computers could no longer keep up with their processors, which ran faster than memory could deliver data.

Engineers therefore added a small auxiliary memory to assist the processor, reducing the time it spent waiting for data.

The name comes from the word “cache”, which English borrowed from French (from the verb cacher, “to hide”) and which means a hidden place for storing provisions, valuables, or contraband.

How cache memory works

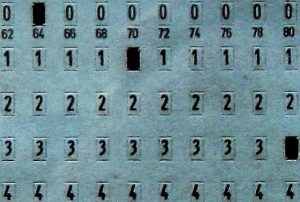

Cache memory organizes information in levels, ranging from the smallest, closest to the processor, to the largest, farthest from it. It is typically organized in three levels, called L1, L2, and L3, to make it easier for the processor to collect information.

When the processor needs data, it first checks the cache, searching each level in turn until it finds the data. If the data is not in any level, the processor fetches it from main memory, and the cache records the result so that it can decide whether to keep a copy.
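The lookup order can be modeled in a few lines of Python. This is a toy sketch, not a description of how hardware is built: the `MultiLevelCache` class is hypothetical, each level is a plain dictionary with no size limit, and every access simply copies the data into all levels.

```python
class MultiLevelCache:
    """Toy model of an L1 -> L2 -> L3 lookup backed by main memory.

    Levels are plain dicts with no capacity limit; a real cache also
    evicts data, which this sketch leaves out.
    """

    def __init__(self, main_memory):
        self.levels = {"L1": {}, "L2": {}, "L3": {}}  # searched in this order
        self.main_memory = main_memory

    def read(self, address):
        # Search each level in order, closest to the processor first.
        for name, level in self.levels.items():
            if address in level:
                value = level[address]
                self._fill(address, value)  # copy into every level, including the faster ones
                return value, name
        # Miss in every level: fetch from main memory and keep a copy in the cache.
        value = self.main_memory[address]
        self._fill(address, value)
        return value, "main memory"

    def _fill(self, address, value):
        for level in self.levels.values():
            level[address] = value


memory = {0x10: "x", 0x20: "y"}
cache = MultiLevelCache(memory)
cache.levels["L3"][0x20] = "y"  # pretend 0x20 was evicted from L1 and L2 earlier
print(cache.read(0x10))  # ('x', 'main memory')
print(cache.read(0x10))  # ('x', 'L1')
print(cache.read(0x20))  # ('y', 'L3')
print(cache.read(0x20))  # ('y', 'L1')
```

The second read of each address is served by L1, which is the behavior the levels exist to provide.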

Each level has its own control logic, which manages the information it holds. Capacity increases from L1 to L3: L1 is the smallest and fastest level, and L3 is the largest and slowest.

Types of cache memory

Three types of cache are the most frequently used:

- Disk cache: a portion of RAM that stores recently read disk data in order to speed up later reads of the same data.

- Track cache: a solid-state, RAM-type memory found mostly in supercomputers because of the high cost of its components.

- Web cache: stores copies of web documents to reduce download times, server overload, and bandwidth costs.
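A web cache usually keeps each document only for a limited time before downloading it again. The sketch below assumes a fixed freshness period, similar in spirit to the HTTP `Cache-Control: max-age` directive. `WebCache` and `fake_download` are hypothetical names, and the clock can be swapped out so the example runs without actually waiting.

```python
import time


class WebCache:
    """Stores downloaded documents by URL and treats them as stale after max_age seconds.

    download is a caller-supplied function; max_age plays a role similar
    to the HTTP Cache-Control max-age directive.
    """

    def __init__(self, download, max_age, clock=time.monotonic):
        self.download = download
        self.max_age = max_age
        self.clock = clock
        self.entries = {}  # url -> (time stored, document)

    def get(self, url):
        now = self.clock()
        if url in self.entries:
            stored_at, document = self.entries[url]
            if now - stored_at < self.max_age:
                return document, "cache"   # fresh copy: no download needed
        document = self.download(url)      # missing or stale: download again
        self.entries[url] = (now, document)
        return document, "network"


# Usage with a fake clock and a fake download function.
fake_time = [0.0]
downloads = []


def fake_download(url):
    downloads.append(url)
    return "<html>" + url + "</html>"


cache = WebCache(fake_download, max_age=60, clock=lambda: fake_time[0])
print(cache.get("https://example.com/")[1])  # network
fake_time[0] = 30
print(cache.get("https://example.com/")[1])  # cache
fake_time[0] = 90
print(cache.get("https://example.com/")[1])  # network (copy is older than 60 s)
```

Only two of the three requests reach the network; the one in between is answered from the stored copy.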

Speed

Cache speed is measured in nanoseconds (ns). Cache memory typically responds to the processor in 15 to 35 ns, although this time increases or decreases depending on the cache's capacity and on the brand of memory hardware on which it is built.

Advantages

- Reduces the number of data accesses that have to go all the way to random access memory (RAM).

- Speeds up the processor's response when executing processes.

- On the Internet, it lets you reopen recently viewed or downloaded files without using extra data or bandwidth.

Disadvantages

When it is not properly optimized, cache memory can cause problems for the processor, slowing down the tasks it has to perform.

In some cases, the cache fails to delete its temporary files, becomes overloaded with information, and slows down every exchange between the cache and main memory, which affects the whole computer.

Cache memory optimization

Cache memory must be optimized to reduce three quantities: the miss rate (the fraction of accesses that do not find their data in the cache), the miss penalty (the extra time needed to fetch data after a miss), and the hit time (the time needed to access data that is in the cache).
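These three quantities combine in the standard average memory access time formula, AMAT = hit time + miss rate × miss penalty. The Python sketch below evaluates it for one cache level and for two; the `amat` function name is illustrative, and the timings and miss rates are made-up round numbers, not measurements of any real processor.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time = hit time + miss rate * miss penalty."""
    return hit_time + miss_rate * miss_penalty


# Made-up example values in nanoseconds, not measurements of any real processor.
single_level = amat(hit_time=1.0, miss_rate=0.05, miss_penalty=100.0)

# With a second level, the L1 miss penalty becomes the average L2 access time.
# Here miss_rate=0.2 is the fraction of L2 accesses that also miss in L2.
l2_time = amat(hit_time=10.0, miss_rate=0.2, miss_penalty=100.0)
two_level = amat(hit_time=1.0, miss_rate=0.05, miss_penalty=l2_time)

print(f"{single_level:.1f} ns")  # 6.0 ns
print(f"{two_level:.1f} ns")     # 2.5 ns
```

With these illustrative numbers, the second level cuts the effective L1 miss penalty from 100 ns to 30 ns, which shows why reducing the miss penalty is one of the three optimization goals.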

The most common types of cache miss are:

- Compulsory (forced) misses: the first access to a block that is not yet in the cache.

- Capacity misses: the cache does not have enough space to hold all the blocks a program needs while it runs.

- Conflict misses: blocks that map to the same location in the cache keep evicting one another, even when there is space elsewhere in the cache.
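The three kinds of miss can be told apart by simulation. This sketch, built around the hypothetical function `classify_misses`, runs a direct-mapped cache and, alongside it, a fully associative LRU cache of the same size: a miss on a block never seen before is compulsory, a miss that the fully associative cache would also suffer is a capacity miss, and any other miss is a conflict miss. The cache sizes and addresses are arbitrary choices for the example.

```python
from collections import OrderedDict


def classify_misses(addresses, num_lines, block_size):
    """Simulate a direct-mapped cache and label each miss as compulsory, capacity, or conflict."""
    direct = [None] * num_lines  # direct-mapped cache: one block per line
    full = OrderedDict()         # fully associative LRU cache of the same size
    seen = set()                 # blocks that have ever been loaded
    counts = {"hit": 0, "compulsory": 0, "capacity": 0, "conflict": 0}

    for address in addresses:
        block = address // block_size
        index = block % num_lines  # the only line this block may occupy

        # Reference model: would a fully associative cache of the same size hit?
        full_hit = block in full
        if full_hit:
            full.move_to_end(block)
        else:
            full[block] = True
            if len(full) > num_lines:
                full.popitem(last=False)

        if direct[index] == block:
            counts["hit"] += 1
        elif block not in seen:
            counts["compulsory"] += 1  # first access ever to this block
        elif not full_hit:
            counts["capacity"] += 1    # even a fully associative cache would miss
        else:
            counts["conflict"] += 1    # only the fixed mapping caused the miss
        direct[index] = block
        seen.add(block)
    return counts


# Addresses 0 and 64 fall in blocks 0 and 4, which share line 0.
print(classify_misses([0, 64, 0, 64, 16, 16], num_lines=4, block_size=16))
# {'hit': 1, 'compulsory': 3, 'capacity': 0, 'conflict': 2}

# Five distinct blocks do not fit in a four-line cache.
print(classify_misses([0, 16, 32, 48, 64, 0], num_lines=4, block_size=16))
# {'hit': 0, 'compulsory': 5, 'capacity': 1, 'conflict': 0}
```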

The most widely used techniques for reducing cache misses are:

- Increasing the block size, which reduces compulsory misses; beyond a certain size, however, fewer blocks fit in the cache and conflict misses can increase.

- Increasing the associativity of the cache, which reduces conflict misses but increases the hit time.

- Adding a victim cache, a small cache that stores blocks recently discarded because of capacity or conflict misses.

- Improving the compiler so that it reorders code and data to reduce conflicts between blocks.
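The first two techniques can be compared with a small simulator. In this sketch, the hypothetical function `count_misses` models a set-associative cache with LRU replacement inside each set. It only counts misses, so it does not show the longer hit time that higher associativity costs, and it does not model victim caches or compiler optimizations. The traces and cache sizes are arbitrary.

```python
from collections import OrderedDict


def count_misses(addresses, num_sets, ways, block_size):
    """Count misses in a set-associative cache with LRU replacement inside each set.

    ways=1 gives a direct-mapped cache; num_sets=1 gives a fully associative one.
    """
    sets = [OrderedDict() for _ in range(num_sets)]
    misses = 0
    for address in addresses:
        block = address // block_size
        lines = sets[block % num_sets]
        if block in lines:
            lines.move_to_end(block)       # hit: refresh its LRU position
        else:
            misses += 1
            lines[block] = True
            if len(lines) > ways:
                lines.popitem(last=False)  # evict the least recently used block in this set
    return misses


# Higher associativity: both caches hold 4 blocks of 16 bytes.
# Addresses 0 and 64 collide in the direct-mapped layout.
trace = [0, 64, 0, 64, 0, 64]
print(count_misses(trace, num_sets=4, ways=1, block_size=16))  # 6 (direct-mapped)
print(count_misses(trace, num_sets=2, ways=2, block_size=16))  # 2 (2-way)

# Larger blocks: both caches hold 64 bytes; a sequential scan touches fewer blocks.
sequential = list(range(0, 64, 4))
print(count_misses(sequential, num_sets=4, ways=1, block_size=16))  # 4
print(count_misses(sequential, num_sets=2, ways=1, block_size=32))  # 2
```

In the first comparison, the two-way cache removes the conflict misses entirely; in the second, doubling the block size halves the compulsory misses of the sequential scan.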